Musician Versus Machine

How one cellist took on artificial intelligence, did battle with his holographic alter-ego, and found himself.

“Wouldn’t it be cool if we had a hologram cellist battling me onstage?”

It was March 2019, and composer Adam Schoenberg and I were spitballing ideas for a new cello concerto commission. I threw the idea out there half-jokingly. With a blank slate, Adam and I didn’t want to produce just another traditional work. We craved impact. Relevance. We had spent most of our careers tired of hearing that classical music was old, boring, elitist, and too hard to understand.

We wanted to shake things up with something modern and fresh—something to attract new audiences, but not at the expense of the Beethoven superfans who keep classical music afloat. That’s the challenge of classical music today. It’s not easy to produce a serious piece of music—one that moves you, sparks intellectual discussion, and has the artistic merit to sit on a program beside Mozart, Brahms, or Shostakovich, and yet is popular enough to fill thousands of seats and help keep orchestras out of deficit. But a hologram straight out of Star Wars and Blade Runner? That had to be too Hollywood for symphony hall.

Yves Dhar Photo: Lindsay Adler

Somehow, we couldn’t shake the idea. Adam and I are wired the same way. We both dream big and love to make the impossible possible. It’s why we clicked instantly as doctoral students at Juilliard nearly twenty years ago, and the reason I always saw us creating a major work together in the mold of, say, Rostropovich and Prokofiev. With this seemingly gimmicky idea of a hologram on stage, we doubled down and ran with it.

It had legs. The deeper we dug, the more meaning we uncovered behind human and hologram facing off in an orchestral arena. It’s old versus new, analog versus digital, acoustic versus electronic, stage versus film, live versus virtual, man versus machine. In a world filled with Siri, Alexa, chatbots, and automated call centers, we humans now depend on technology. And though much of classical music has resisted technology in favor of the acoustic purity of violins, clarinets, and French horns, we figured: why not explore this modern love-hate relationship in the concert hall? We had a solid concept in mind, but never in our wildest dreams did we think it would generate A.G.N.E.S., the machine-learning algorithm that composed the music played by my holographic rival in Automation: Concerto for Human and AI Cellists.

“I’ll be back.” —Cyberdyne Systems Model 101 in The Terminator

Artificial intelligence wasn’t really on the table in those early brainstorming sessions. Sure, Adam and I had bandied the notion about, while sprinkling in wisecracks about The Terminator being sent back in time from a dystopian future to kill and prevent us humans from creating a piece like Automation.

But how realistic was this idea, anyway? Would it fit in the limited budget I had fundraised for this project? We were already tasked with figuring out how to produce a hologram onstage; A.I.-generated composition seemed like one rung of the impossible too high to climb, even for us.

That all changed one day when Adam shared the human versus hologram narrative of Automation with his fellow Occidental College professor Justin Li. Justin, who teaches cognitive and computer science, casually slipped into the conversation, “You know, there are A.I. out there that not only can replicate your music, but can digest and learn it, then start to compose something new.”

Not only did this type of machine-learning algorithm exist, it was within our reach. Adam would go on to collaborate with Kathryn Leonard, chair of the computer science department at Occidental, and her partner, Ghassan Y. Sarkis, a professor of mathematics and statistics at Pomona College, who built A.G.N.E.S. (Automatic Generator Network for Excellent Songs) over the course of several months. By August 2021, A.G.N.E.S. was online and ready to accept data in the form of musical scores.

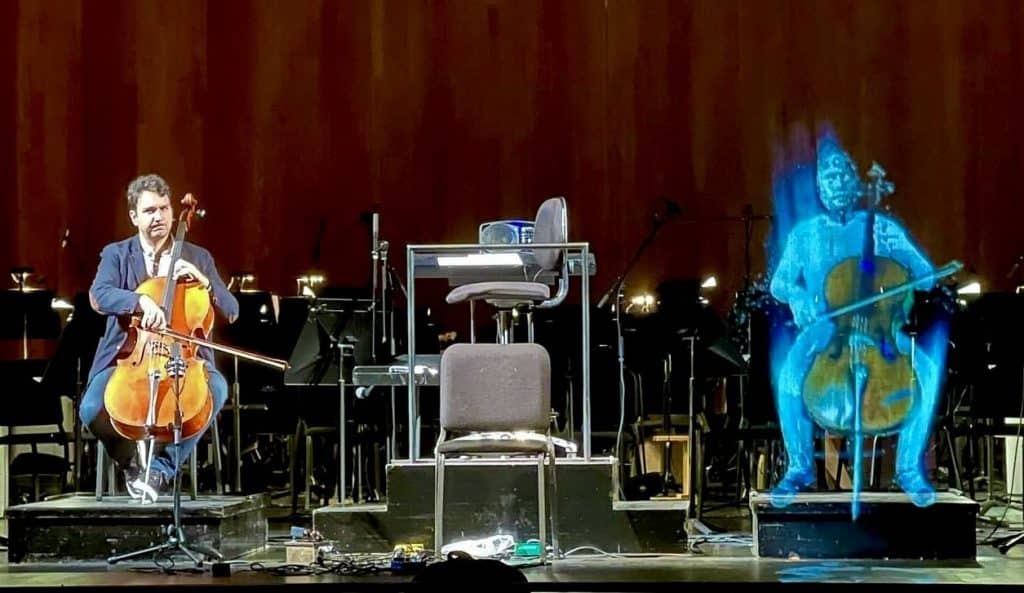

The holographic cellist in Automation: Concerto for Human and AI Cellists, performing a part composed by a machine learning algorithm.

Photo: Courtesy of Yves Dhar

Data entry is crucial to machine learning, so Adam chose model scores with purpose: “We fed it Picture Studies, American Symphony, and my percussion concerto Losing Earth—three of my large-scale works, so it could learn about my voice, my color, my instrumentation, my orchestration,” he told me. “At the very end, we fed it my solo cello piece Ayudame, so it could learn how to write for cello.”

After four months of learning, analyzing, and modeling every aspect of those scores, A.G.N.E.S. was ready to generate original content. Adam, Kathryn, and Ghassan prompted A.G.N.E.S. with the human cellist line—my part—that Adam had by then written for Automation. The results were disappointing. “The output was square and basic,” Adam said, “almost all eighth notes with little variety.”

Kathryn and Ghassan explained how the algorithm works as if we were beginning students: “A.G.N.E.S. is actually two competing networks. The generator tries to pass off ‘fake’ music that it makes, and the discriminator tries to catch the ‘fake’ music and deem it bad.” By letting this cycle play out over more time, both networks drastically improve the quality of the music generated. Sure enough, after two more weeks, A.G.N.E.S. had generated hundreds of measures with a dizzying array of rhythms, intervals, and registers.

On first listen to A.G.N.E.S.’s output, we were both amazed at its ability yet dumbfounded by how odd it sounded. A.G.N.E.S. definitely had its own writing style. It sounded nothing like the ear-catching melodies, lush harmonies, and breathtaking colors of Adam’s scores that it had so scrupulously analyzed and modeled. In fact, A.G.N.E.S.’s music—crafted so deftly, yet head-shakingly cold—teetered right on that line: was it written by a human or was it fake? Folded within his orchestration, the A.I.-generated music stuck out so much that there was temptation for Adam to mold it to give the score a more cohesive feel. We both agreed that that very human urge to edit would defeat the purpose of contrasting human subjectivity and A.I. artifice. “I wanted to stay true to the process,” Adam said. He literally cut and pasted the number of measures needed for the A.I. second cello part and placed it into the Automation score. The computer did the rest.

With A.G.N.E.S.’s part in place as the final piece of the puzzle, the score to Automation was complete. We now needed to give A.G.N.E.S. its voice. How would the A.I. cellist sound onstage? To give A.G.N.E.S. a “humanoid” feel (as cello-like as possible, but still robotic), we opted for me to pre-record the A.I.-generated music on a real cello and process the sound through synthesizers. Adam recruited sound designer Alex Brinkley and synth programmer Gabriel Bethke to assist him in this transformation.

There was just one problem with this plan: some of A.G.N.E.S.’s music was not humanly possible to play. By the end of what we call “Battle Mode”—the movement when A.G.N.E.S. and I try to one-up each other atop a frenetic orchestra, with it finally surpassing me in technical ability—A.G.N.E.S. wrote a perpetual motion of dissonant leaping intervals at breakneck speed. In order to record this virtuosic A.I. cadenza, we would need to split the passage into small chunks and record at half speed.

The audio-engineering wizardry results in a heart-pumping sonic climax that leaves the audience gasping for air when orchestra, lights, and A.G.N.E.S. “short-circuit” into blackness onstage.

“I see dead people.” —Cole Sear, in The Sixth Sense

While Adam, Kathryn, and Ghassan tended to A.G.N.E.S.’s composition lessons, I continued to tackle how to create the hologram that would bring A.G.N.E.S. to life onstage. Unsure of where to start looking, I drew inspiration from the late gangsta-rap icon Tupac Shakur—not his music, but his fabled back-from-the-dead performance at the Coachella music festival in 2012. Tupac died in 1996, but the entertainment company AV Concepts brought him back to life using holographic projections. Tupac’s resurrection was a modern version of those blue-green ghosts we saw as kids in haunted houses. Pepper’s Ghost, a nineteenth-century illusion technique still widely used today, is simple enough: it involves bouncing light off of glass to reflect a floating image onstage. But for Tupac’s headline act, AV Concepts needed to build a special stage with massive panels of angled glass. Factor in complex video editing and computer-generated imagery (C.G.I.) and the bill came out to nearly $400,000. That setup was neither transportable nor affordable; A.G.N.E.S. would not be getting the Tupac treatment.

It was November 2020, in the thick of the pandemic, when the media reported yet more dead celebrities coming back to life. The majority of us were sheltering in place, but Kim Kardashian was throwing a lavish birthday party on a private island. With the help of U.K.-based hologram company Kaleida, then-husband Kanye West surprised her with a priceless gift: a life-like holographic projection of her late father. A cold call to Kaleida co-founder Daniel Reynolds gave us the breakthrough we needed. Kaleida manufactures the Holonet, a semi-transparent foldable scrim that makes two-dimensional video cast from a high-definition projector appear three-dimensional and floating.

“So, what does A.G.N.E.S. look like?”

That question caught me off-guard. I was on a Zoom call with Mauricio Ceppi, founder of the visual collective Funktaxi 1533, and Ryan Wise, the wizard who runs the motion graphics firm Dasystem. These two video artists would be my ambassadors to the foreign land of C.G.I.

“You know, I’ve been so caught up in how to project the hologram, I never really gave A.G.N.E.S. a face,” I replied.

“You’re gonna need some sketches, a storyboard, a timeline, some detailed descriptions, bro,” said Mauricio.

Lost in the sonic realm of the musician, I’d failed to consider that I’d need these filmmaking tools, too. Truth is, Adam and I had taken our opening cues from cinema. With help from his Hollywood scriptwriter wife, Janine Salinas Schoenberg, we had even written a simple plot to help define the form of the concerto: Lonely and curious, a human cellist builds an A.I. alter-ego. The machine learns from him, at first in slow call-and-response, but then exponentially fast. Eventually, they battle and the A.I. cellist exceeds the human’s ability, leaving him to question the purpose of his existence.

Lost, he digs deep, asks what it means to be human and redeems himself by looking back at tradition, embracing imperfection, and indulging in beauty and heart-on-sleeve emotion. A.I. returns briefly at the end, to remind the human that he must co-exist with its perfectionism moving forward. With plot, characters, and electronic soundtracks to mix in with the orchestra, C.G.I., and visual effects, was this music or cinema? It sure felt like we were making a short film.

Amid the complexities of green screens, blue suits, motion tracking, time codes, and Adobe After Effects—all things used to make Marvel superheroes, and that this humble human cellist knew nothing about—even I began to wonder if we had gone too far. A.I.-generated composition was already a tough pill to swallow. Would the Beethoven superfans protest these cinematic pyrotechnics? Had I betrayed my vow to keep Adam’s breathtaking score the main feature, with projections solely acting as visual aids?

Dhar in pre-production for Automation

Photo: Courtesy of Yves Dhar

“Halldoro-what?”

In search of dystopian sounds to fill the world of Automation, Adam had dived deep into electronic musical inventions. He had discovered the Halldorophone, a cello-like, electro-acoustic instrument that creates gnarly sounds through a feedback loop, and which had been featured in Hildur Guðnadóttir’s Oscar-winning score to Joker and HBO’s Chernobyl.

Any cellist can tell you that flying with their instrument to an engagement is never fun. The prospect of traveling with two large instruments, along with the Holonet and other gear, seemed preposterous. Didn’t we already have so many moving parts with A.G.N.E.S., synth programming, and holographic projections? “There’s just something so mesmerizing about it,” Adam insisted. “It just draws you in.” He was right. Its otherworldly vibrations penetrate your soul.

Primarily featured in film scores or in solo appearances, this revelation of an instrument would make its world premiere onstage with orchestra with Automation.

Adam and I had decided to spotlight its grumbling frequency beats, harmonic overtones, and distortion with a visceral three-minute improvisation at the heart of the piece. In near-absolute darkness after the climax of “Battle Mode,” and with its feedback loop introducing an uncontrollable element, the Halldorophone solo captures the distraught, lost, and frail psyche of the human cellist upon learning of its technical inferiority.

Against the cacophony of these raw sounds, I imagined a backdrop of the purest strains of the orchestra sneaking back in as a guiding light. I asked Adam to write a quasi-Baroque string chorale full of heartfelt suspensions and, in one evening, he delivered the framework.

I was up at 3 A.M. tending to my now-toddler son when I opened my email to find an audio clip. What I heard out of those unforgiving iPhone speakers made me weep. Adam had written a chorale so beautiful, so angelic, that it was on par with Barber’s and Albinoni’s beloved Adagios. The transition from the growlings of the Halldorophone to the serenity of the chorale is like a musical redemption—dare I say resurrection?—unlike any I had experienced in performance before.

Adam Schoenberg

Photo: Sam Zauscher

A.G.N.E.S. comes to life

With all the blood, sweat, and code shed in nearly five years of production, Adam and I needed the right conductor and orchestra to premiere this groundbreaking work. Teddy Abrams, the young maverick maestro who would be named Musical America’s 2022 Conductor of the Year, was our dream fit. A prolific composer himself, a champion of works new and old, he would be a terrific steward for our risk-taking production.

On May 15, 2022, we performed the world premiere of Automation with the Louisville Orchestra to a packed house.

I was nervous, not so much for my impending performance but more for the work’s reception. Would the audience like it? Was it worth all the trouble? I was particularly nervous for Adam, that his newest work be presented in the best light possible. The intrigue in the hall was palpable. Teddy was crisp with the baton. The orchestra was electric. A.G.N.E.S. was incandescent, floating, and menacing in sound. I emptied my emotional tank, even letting loose an unplanned scream where Adam’s score had called for me to make a lot of noise. But it wasn’t until the rousing ovations that got Adam to join me and A.G.N.E.S. (who takes holographic bows) for a second curtain call that I knew we’d created something special. It was icing on the cake when Annette Skaggs, longtime local critic, wrote, “Automation is perhaps one of the best new pieces that have been performed by our Orchestra in many years. The piece was like a rollercoaster ride I didn’t want to end. Stunning from first note to last. I am still craving to hear more.”

What started out as a questioning of the classical concert experience transformed into a referendum on the use of A.I. in classical music. In booking Automation for future seasons, I have found that the two ideas are linked. One Grammy-winning music director of a top-tier U.S. orchestra told me, “Adam’s music is great. Tell him to rewrite the second cello part for another human cellist. Then I’ll think about programming it.” At the League of American Orchestras National Conference this past June, a forward-thinking executive director of another major orchestra exclaimed, “This is cool! I think our audiences would love the hologram, the A.I., the whole experience. But is the music any good?”

In my many interactions, I’ve come to notice that those who wish to preserve the old classical traditions fear A.G.N.E.S. the most and dismiss Automation as a gimmick. Others who wish to reinvent the concert experience and push our beloved artform forward support A.I. as a tool, and embrace the concerto as a bold new expression.

Whatever anyone’s taste, it is my sincere hope that Automation spurs lively debate about both A.I. and the classical concert experience. Automation was never about making a statement about A.I. Instead, our production asks all the existential questions that A.I. poses today: Can a technically superior machine with no emotions make music that moves us? Is it a tool to help humans reach new heights? Will it replace us?

But along the five years of production full of twists, turns, and pandemic pause, I learned far more about what it really means to be human. It means taking risks. It means appreciating beauty in any form. It means taking on challenges and making mistakes. It means getting lost in a journey full of doubts and having faith you’ll find yourself again.

Ultimately, it means connecting with others by whatever means possible. A.G.N.E.S. and its A.I. might be polarizing, and the Halldorophone and its beautiful “noise” might not be for everyone. Yet, when I play each soaring theme or dazzling arpeggio of Adam’s moving score in the face of those digital inventions, I can’t help but feel that much more alive and human.

Besides, isn’t it cool to see a hologram battling a human cellist onstage?