AI: What Does It Mean? And How Is It Making These Decisions?

Tod Machover, a pioneer of the connections between classical music and computers, considers the history, promise, and dangers of artificial intelligence.

Sitting at his home in Waltham, Massachusetts, the composer Tod Machover speaks with the energy of someone half his 69 years as he reflects on the evolution of digital technology toward the current boom in artificial intelligence. “I think the other time when things moved really quickly was 1984,” he says—the year when the personal computer came out. Yet he sees this moment as distinct. “What’s going on in A.I. is like a major, major difference, conceptually, in how we think about music and who can make it.”

Perhaps no other figure is better poised than Machover to analyze A.I.’s practical and ethical challenges. The son of a pianist and a computer graphics pioneer, he has been probing the interface of classical music and computer programming since the 1970s. As the first Director of Musical Research at the then freshly opened Institut de Recherche et Coordination Acoustique/Musique (I.R.C.A.M.) in Paris, he was charged with exploring the possibilities of what became the first digital synthesizer while working closely alongside Pierre Boulez. In 1987, Machover introduced Hyperinstruments for the first time in his chamber opera VALIS, a commission from the Pompidou Center in Paris. This technology incorporates innovative sensors and A.I. software to analyze the expression of performers, allowing changes in articulation and phrasing to turn, in the case of VALIS, keyboard and percussion soloists into multiple layers of carefully controlled sound. Machover had helped to launch the M.I.T. Media Lab two years earlier, in 1985, and now serves as both Muriel R. Cooper Professor of Music and Media and director of the Lab’s Opera of the Future group.

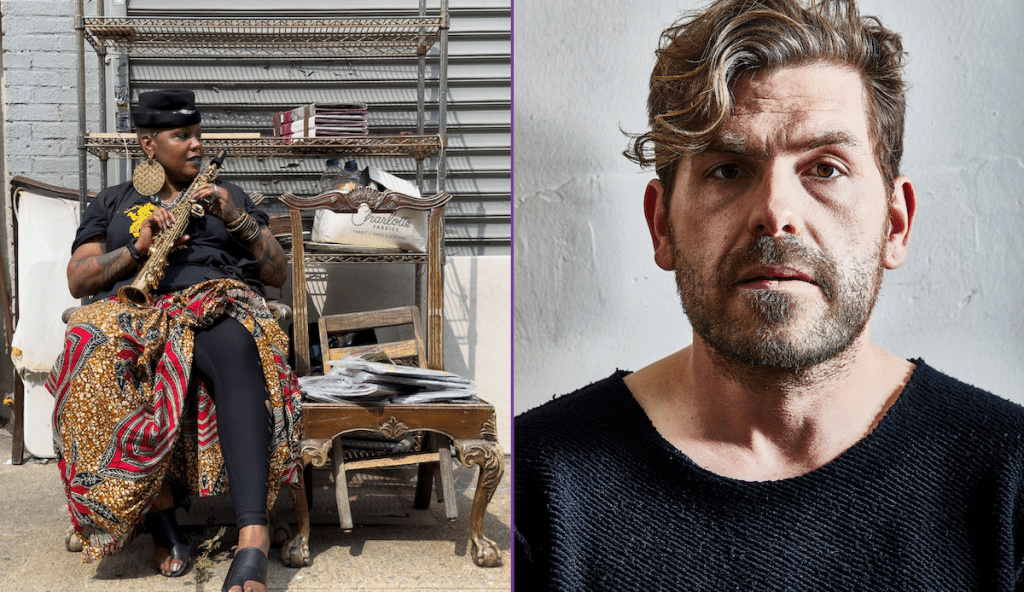

Tod Machover

Photo: Sam Ogden

Machover has long pursued his interest in using technology to involve amateurs in musical processes. His 2002 Toy Symphony allows children to shape a composition, among other things, by means of “beat bugs” that generate rhythms. This work, in turn, spawned the Fisher-Price toy Symphony Painter and has been customized to help the disabled imagine their own compositions.

We spoke via Zoom about the arc of his innovations, and what recent developments imply about the act of making music.

How is the use of A.I. a natural development from what you began back in the 1970s, and what is different?

In terms of big history, I think the other time when things moved really quickly was 1984—literally, the year when the first affordable personal computer came out. Everybody could have a pretty powerful machine at home. John Chowning at Stanford had developed something called frequency modulation (F.M.), which turned out to be an incredibly efficient way of creating sound and a very intuitive way for musicians to understand how to manipulate it. Yamaha licensed the patent and made this instrument called the DX7, in May 1983.

The third thing that happened around that same time was that, like a miracle, all of the computer companies and music instrument companies decided to create a standard for all of these computers and digital instruments to talk together. And that was called M.I.D.I., or Musical Instrument Digital Interface. So, everybody could have a powerful music generation instrument, and everybody could have a way of controlling these instruments by computer.

There are lots of things that could only be done with physical instruments 30 years ago that are now done in software: you can create amazing things on a laptop. But what’s going on in A.I. is like a major, major difference, conceptually, in how we think about music and who can make it.

One of my mentors and heroes is Marvin Minsky, who was one of the founders of A.I., and a kind of music prodigy. And his dream for A.I. was to really figure out how the mind works. He wrote a famous book called The Society of Mind in the mid-eighties based on an incredibly radical, really beautiful theory: that your mind is a group of committees that get together to solve simple problems, with a very precise description of how that works. He wanted a full explanation of how we feel, how we think, how we create, and to build computers modeled on that.

Little by little, A.I. moved away from that dream, and instead of actually modeling what people do, started looking for techniques that create what people do without following the processes at all. A lot of systems in the 1980s and 1990s were based on pretty simple rules for a particular kind of problem, like medical diagnosis. You could do a pretty good job of finding out some similarities in pathology in order to diagnose something. But that system could never figure out how to walk across the street without getting hit by a car. It had no general knowledge of the world.

We spent a lot of time in the seventies, eighties, and nineties trying to figure out how we listen—what goes on in the brain when you hear music, how you can have a machine listen to an instrument—to know how to respond. A lot of the systems which are coming out now don’t do that at all. They don’t pretend to be brains. Some of the most kind of powerful systems right now, especially ones generating really crazy and interesting stuff, look at pictures of the sound—a spectrogram, a kind of image processing. I think it’s going to reach a limit because it doesn’t have any real knowledge of what’s there. So, there’s a question of, what does it mean and how is it making these decisions?

What systems have you used successfully in your work?

One is R.A.V.E., which comes from I.R.C.A.M. and was originally developed to analyze audio, especially live audio, so that you can reconstruct and manipulate it. The voice is a really good example. Ever since the 1950s, people have been doing live processing of singing. The problem is that it’s really hard to analyze everything that’s in the voice: The pitch and spectrum are changing all the time.

What you really want to do is be able to understand what’s in the voice, pull it apart and then have all the separate elements so that you can tune and tweak things differently on the other side. And that’s what R.A.V.E. was invented to do. It’s an A.I. analysis of an acoustic signal. It reconstructs it in some form, and then ideally it comes out the other side sounding exactly like it did originally, but now it’s got all these handles so that I can change the pitch without changing the timbre. And it works pretty well for that. You can have it as an accompanist, or your own voice can accompany you. It can change pitch and sing along. And it can sing things that you never sang because it understands your voice.

I’m now updating the Brain Opera using R.A.V.E. We did a version in October with live performers. For that particular project, I wanted a kind of world of sounds that started out being voice-like, and ended up being instrumental-like, and everywhere in between. The great thing about A.I. models now is that you can use them not just to make a variation in the sound, but also a variation in what’s being played. So, if you think about early electronic music serving to kind of color a sound (or add a kind of texture around the sound, but being fairly static), with this, if you tweak it properly, it’s a kind of complex variation closely connected to what comes in but not exactly the same. And it changes all the time, because every second the A.I. is trying to figure out: How am I going to match this? How far am I going to go? Where in the space am I? You can think of it as a really rich way of transforming something or creating a kind of dialogue with the performer.

And you’re manipulating material yourself rather than just feeding the algorithm and seeing what comes out the other end, right?

Yes. We use some existing models but also build our own sometimes. We’re working hard right now to build models where you can customize every aspect, and where customizing is easy enough that you don’t have to be an M.I.T. graduate student. Most models now are kind of like black boxes. What comes out is a big surprise. That’s amazing, but it’s hard to personalize them to the degree you’d want to, although we do a pretty good job with R.A.V.E.

The next models are called diffusion models. I find they work best if you’re building up a library of sounds. For instance, for this first version of Overstory that we did in New York in March, where I was trying to build the sound of a forest with all its variety, I could have done it by hand. I made a lot of recordings out in the woods. I also made a lot of sounds from scratch in my studio. We live on an 18th-century farm here, and so we have an old barn that is filled with wood. I spent a day scraping and had lots of recordings. I fed all of those into a model, and from that we made spectrograms. And then with the spectrograms, you could go in and say, make the frequency higher, or more percussive or denser. You can give it words, or actual frequency information. And then it transformed these sounds. You could make many variations, which were really interesting but still in the family, and many of them would have taken a lot of time to do by hand with studio techniques. I wanted a language like trees that communicate underground, and it worked really well for that.

For commencement at M.I.T., I created a segment for my City Symphony for Boston. We have this incredible mayor [Michelle Wu]. She’s really shaking everything up; she played Mozart’s 21st Piano Concerto with the Boston Symphony Orchestra under Andris Nelsons. I ran the Mozart and the sounds of Boston through this diffusion model. And in this three-and-a-half minutes, there were these incredibly interesting hybrids. I’m pretty imaginative, but I wouldn’t necessarily have thought of these particular cross-pollinations of Fenway Park and a piano solo, or the sound of traffic and the orchestra.

The other thing I’m really interested in, and I think that this technology will allow us to do it, is to create a piece of music where I would take all of the material from a composition and break it apart: Here’s the bass line. Here’s a chord. Here’s a texture. And say, here’s a toolkit with a thousand elements; you can take them apart and put them together the way you want, like LEGO bricks. What’s even more interesting is to put them in a form where this A.I. system, let’s call it A.I. radio, can actually enable switching channels: With just a few dials, you might be able to change the way the piece develops. Every time, the A.I. system makes it play out a bit differently.

One term we use in computer programming is a “branch.” So, you can imagine a piece of music where the A.I. system says, now it’s going to grow in this direction or that direction. The possibility of having this core of material that develops differently each time is very real. Maybe the tempo is different, or the intensity, or the sound of the orchestration. The added dimension is that it’s quite possible to add a few dials to this A.I. radio, so that if you’re listening, you could be the one who says, “I’m kind of anxious today. I’d really like to hear a version which is as chill as you can make it.” Or “I want to hear a version that is as intense and as wild as possible.” You can imagine a bunch of variables that would be interesting and intuitive, but all related to that piece, not just random.

Does all of this raise copyright issues?

There are enormous problems with sucking in other people’s music. And the crazy thing about these A.I. models, of course, is that it’s almost impossible now to track what’s in them. It’s much more complicated than sampling because most of the legal cases ask: “Can you tell what the sample was? Can you audibly hear it? Is it fair use, or is it ripping off someone’s idea?” In these A.I. models, there are thousands and thousands of bits of audio. Once they turn into a model, and then start playing things back, either live or with these various prompts, right now it’s impossible for an outsider to go back and trace what’s in there. So, if they’ve taken my entire oeuvre, let’s say, and put it into someone else’s model, and it’s generating something that sounds kind of like my music, that’s really dangerous. It’s likely that fairly soon there will be ways of tracing what goes into a model. Maybe you will have to tag material when you create it. In fact, it’s already happening. For our admissions applications to the Media Lab this year, we were not sure if some were written by a machine. I’m assuming that by next year a place like M.I.T. will have to have some way of tracing whether a human being wrote something. It’s a really odd moment.

How could A.I. help support your endeavor to facilitate amateur creativity?

One thing I’ve spent my career doing is trying to reduce some of the barriers for somebody who didn’t take years of lessons or isn’t naturally gifted to be part of a musical experience. You can do that by making Guitar Hero, or you can do that with Hyperscore, where you can draw music. We’re very careful to try to ensure that the activity you’re doing is meaningful, that you’re not being led to think you’re doing something you’re not really doing. You’re getting closer to the music and, hopefully, with something like Hyperscore, where you’re drawing your own composition, it really allows you to express something you care about.

The problem with these systems is that they are already full of music, and there is no barrier for pushing a button and saying, “Oh, make me something.” And so the question is, what is a person doing? You could do an enormous amount without really putting much of yourself into it. And is that what we want?

I’ve invested a lot of time in trying to make it possible for people to interact meaningfully and creatively with music. Now our machines are producing all this amazing stuff, and they don’t have any music training. The machines don’t really have any knowledge about how it works—what harmony is, or what rhythm is. And maybe that’s not a good thing. We need to put context and knowledge back into these systems before they become so prevalent and so closed off that you can’t shape them anymore. So, we’re trying to work really fast.

The more serious issue is that machines don’t care about anything: The only reason music matters is because it’s a way of a human being reaching out to somebody else and saying, this is something that I’ve observed or felt, or something that I’m thinking. It has meaning because it’s related to my life, or to yours. A machine has no investment like that, and it never will. And if we can’t build these systems so that I can shape them, or anybody can shape them with something they care about, then it’s dangerous. But I think that something really powerful is happening.